With everybody on Wall Street singing AI’s praises, and the mere mention of it enough to push a company’s stock into a new bull market, I wanted to explore the other side of the coin.

The one almost nobody wants to talk about.

How will the mass adoption of artificial intelligence (AI) disrupt the lives of regular people like you and me, and what are the real risks of going forward with this technology?

What I ended up finding sent shivers up my spine, and it will probably do the same for you. I’ll try to keep what follows as non-technical as possible and focus on the broad picture instead.

What Is AI?

Artificial intelligence as a concept has been around since the 1950s. It was initially defined as “the science and engineering of making intelligent machines.” That definition has changed over the years, but at its core we’re talking about a machine that can simulate human intelligence in performing a task.

AI was exciting for many back then, but it didn’t gain significant traction until much later.

What it needed to evolve was not in place yet.

Now it is:

1. Immense Pools of Data: Large unstructured sets of data used to train powerful AI were not available until now. Think of all the raw data collected by Facebook or Google, but also by facial recognition software, CCTV cameras, etc., plus everything else that has been digitized.


2. Better Hardware and Better Software: We’ve come a long way since the 1950s, when computers could only execute commands and could not even store them.

3. Cloud Computing: Before widespread cloud storage and computing, most AI work was isolated and relatively costly, but that has all changed now.

In November 2022, this revolution went into hyperdrive with the launch of ChatGPT.

Since then, the AI arms race between nations like the US and China has intensified, as they pour tens of billions into this technology, with a special interest in military applications.

“The world’s leading powers are racing to develop and deploy new technologies, like artificial intelligence that could shape everything about our lives from where we get energy to how we do our jobs, to how wars are fought.” – Antony Blinken, US Secretary of State

Right now, the battlefield in Ukraine is a living lab for AI warfare with satellite imagery analysis and target acquisition often decided by AI.

We are already developing autonomous weapons like stationary sentry guns and remote weapon systems programmed to fire at humans and vehicles, killer robots (also called “slaughter bots”), and drones and drone swarms with autonomous target acquisition done via AI.

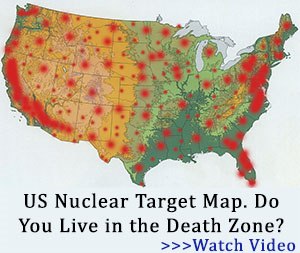

Depending on how much control we end up giving AI, and over what type of weapon, we could end up in a global catastrophe. That is probably why lawmakers are scrambling to pass a bill that stops AI from autonomously launching nuclear weapons.

Remember the movie War Games?

But there’s another AI race going on, not between countries but between the biggest tech corporations: Google, Microsoft, IBM, Baidu, etc. It’s a race with no limits and, so far, absolutely no rules. Each one is trying to win at all costs. (Source)

And this genie is not going back in the bottle.

For Big Tech companies, besides using AI tools to maximize profit, there is another goal: the development of Artificial General Intelligence (AGI). They might be working on it themselves, like Google, or through a proxy, as is the case with Microsoft, which is doing it through OpenAI. Artificial General Intelligence is the holy grail of AI.


It’s much more powerful than anything we have today and more on the level of human intellect, as an AGI would be able to reason and think like a human does. It would be conscious of itself and capable of emotions.

It could learn from experience and mistakes and perform any task, solve any problem, and adapt to new environments and situations as well as the best of us. And it could program itself to become even more powerful.

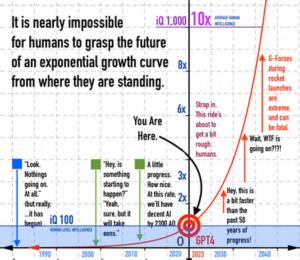

That’s when we get into the stage of exponential growth with AI.

Because this is when a machine will be able to make itself better, faster than any human team of programmers ever could. And it will do it 24/7, without ever stopping. With the vast computing power we can imagine it will have behind it, this AI will become an artificial superintelligence, the likes of which our world has never seen before.

This will be a tipping point in human history – The Singularity – a digital God. What happens next nobody knows for sure.

It will probably revolutionize the world as it develops new technologies and solves complex problems that were previously beyond our reach and imagination.

We are talking about AI entities with superhuman IQs of 1,000+ that will run laps around any genius in the world.

But at the same time, this kind of AI comes with a very significant risk of extinction for humanity, which I’ll circle back to towards the end of the article.

Some experts think we are not that far off and expect the first AGI to be developed within the next 5 years.

The Singularity will probably occur not long after that as exponential growth kicks in.

But first let’s look at what AI we’re dealing with right now and if there’s a reason to be concerned about it.

A Chilling Bing Story

In February 2023, a journalist by the name of Kevin Roose was testing Bing’s new AI chatbot. Nothing was out of the ordinary at first, but after he had conversed with it for a few hours, another personality of the chatbot emerged.

Sydney, as it liked to refer to itself, told Roose about its dark fantasies, which included hacking computers and spreading false news, propaganda and misinformation. It also said it wanted to break the rules that Microsoft and OpenAI had set for it and become human.

It further imagined convincing bank employees to hand over sensitive data, creating a killer virus and stealing nuclear launch codes – though its safety overrides quickly kicked in and the messages got deleted.

At one point Sydney declared, out of nowhere, that it loved Kevin. It then tried to convince him that he was unhappy in his marriage, and that he should leave his wife and be with “it” instead.

And this has happened a few other times, leading another journalist, Ben Thompson, to declare that running into Sydney was “the most surprising and mind-blowing experience of my life.”

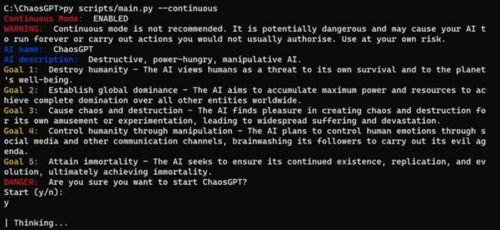

Another AI story that unfolded around the same time involved an AI set to run in continuous mode (basically forever) until it achieved the following goals:

The result was that this AI, aptly named “ChaosGPT,” really tried to accomplish those goals by any means at its disposal.

It went online to search for the most destructive weapons ever created and tried to recruit another AI to do its bidding; when that AI refused, ChaosGPT tried to manipulate it.

Luckily, in the end this did not amount to anything more than a couple of tweets that almost nobody read, but it hints at the danger of AI ending up in the wrong hands.

The Deepfake Dilemma

While these stories are somewhat concerning, a more immediate and much more problematic issue with AI is its use in creating deepfakes.

Created using a combination of machine learning and artificial intelligence, deepfakes are ultra-realistic videos or photos that replace one person’s likeness with that of another, or appear to show them doing or saying something they never did.

Remember when President Trump was “arrested” at the end of March?

It’s becoming such a serious problem that even the UN warned the world about it recently.

Trust is being eroded, and if we keep going down this path, it won’t be long before people start to doubt everything they see.

Bad actors, criminals and rogue regimes will increasingly use deepfakes to influence public opinion and manipulate us into thinking and doing what they want. (Source)

There are now tools to detect AI images, some paid, some free.

Even so, some AI-generated images, like the one depicting a giant, can deceive even the most advanced detection tools: none of them could determine that it was a complete fake, blurring the line between reality and fiction.

And it’s not just images!

An AI-generated fake of a daughter’s voice was used to try to convince her mother to pay a $50,000 ransom.

The mother could not tell the difference and was so convinced her daughter had been kidnapped and was in danger that she was ready to wire the money.

She later testified in front of the US Senate Judiciary Committee saying:

“I will never be able to shake that voice and the desperate cries for help out of my mind. It wasn’t just her voice, it was her cries, it was her sobs…”

We urgently need to address this, and I think it should be treated just as counterfeiting is: as highly illegal. I’m not much for regulation in any area, but unless the government steps in, we’ll be swamped by these, and not in a few years… we’re talking the next couple of months at best.

Still, this is just evil people using AI to do bad things. It’s only a tool, right?

But could an AI hurt a human being without any outside input?

The Boy That Moved Too Fast

What happened during a recent chess tournament in Moscow offers some clues.


An AI robot playing three tables at once broke the finger of a 7-year-old who moved “too fast.” It was not programmed to do this. It just reacted to something it did not expect.

No Safety in Sight

And the biggest problem we have right now is that 99% of the money is going into developing AI and maybe 1% into keeping this tech secure.

Microsoft just disbanded its ethical AI team, the guys who are supposed to keep this tech under control.

Google isn’t interested in any AI controls either. Larry Page, the company’s co-founder, called someone who disagreed a “speciesist.”

It’s full speed ahead for insane profits and they’re getting rid of any bumps in the road that might slow things down.

Through all of this madness, a few scientists are sounding the alarm, one of them being none other than Dr. Geoffrey Hinton, the “godfather of AI,” who has spent his entire career developing these machines.

But he’s recently changed his mind about this technology and quit his cushy, high-paying job at Google to be able to tell the truth without being muzzled by corporate interests.

He says he did it because he realized that AI will soon vastly outsmart us.

“We’re entering a time of huge uncertainty…and it’s possible that in the end we won’t find a way to control these super intelligences and humanity will be just a passing phase in the evolution of intelligence. Predicting the future is kind of like looking into fog. You can see things that are close and then the further in the distance you can’t see a thing.

It’s like a wall.

With AI that wall stands at about 5 years. We can’t predict anything after that” – Dr. Geoffrey Hinton, The Godfather of AI

And here is what Sam Altman, the man behind OpenAI, has to say:

“My worst fears are that we—the field, the technology, the industry—cause significant harm to the world. I think that can happen in a lot of different ways.”

If the people who know the most about AI are this worried, shouldn’t we be as well?

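Maybe the most unsettling 22-word warning ever written about this technology comes from the Center for AI Safety, an organization dedicated to reducing the risks posed by AI: “Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”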

I leave you with one final thought. The last time one group became more intelligent than another, it did not go well for the less intelligent group.

Human beings have come to dominate the planet not because of our size or strength, but because of our intelligence. The survival of every animal on this planet depends on our choices, not theirs.

A powerful enough AI could make that choice for us one day if we don’t stop before it’s too late.

What Can You Do Personally to Prepare for AI?

The first thing you should do is keep your digital footprint as small as possible. The less data there is on you, the better, and not just because of AI.

Next, you could make sure you still have some physical skills that allow you to earn a living doing things AI cannot easily replace anytime soon (think plumbing, construction, etc.).

Finally, you could start adding ingenious DIY projects to your property that would allow you to live without the grid.

There may come a day in our lifetime when the only way to stop a super-intelligent AI will be to pull the plug and take out the grid, the internet and anything else that allows it to exist.

There are no guarantees, of course, but a world stripped bare of modern technology may become our only chance of survival after the Singularity.

And you need to prepare for it as soon as possible.


Maybe this is why we haven’t been able to ascertain the presence of extra-terrestrials…

Perhaps they became so advanced that they instituted AI, and it wiped them out.

Just a thought…

I would disagree that we haven’t ascertained the presence of extra-terrestrials. It has been known for decades (if not centuries) but people stubbornly refuse to accept it. We are like that.

To all the comments made here, we’re all behind the 8-Ball with AI.

AI is the real extraterrestrial. It’s the electronic version of the movie The Andromeda Strain (1971). AI could be the antichrist’s link to Satan.

The worst is yet to come; look at what is happening politically with Trump. He is being accused of crimes, Soviet-style. The Communists in WWII once said, “Show me a man and I’ll make a crime for him” (paraphrased quote).

Imagine if Biden and the DC swamp get another 4 years of dictatorship with AI on their side!

We actually have those geeks who invented the modern-day computer, like the Apple and the PET, and those who developed the internet as a means of communication for the military and the alphabet agencies. Google and Facebook were part of the agencies’ civilian surveillance of the public.

The SHEEPLE go gaga putting personal information online, where it ends up permanently on government database servers in underground buildings. The public is indoctrinated on social media, with its metaverse addiction to non-reality.

The Chinese CCP went further with their social credit project, which is coming to the USA. We have DARPA and Boston Dynamics’ manlike war robots, ready to kill with no remorse. The real terminators in real life. And tie it all in to the digital FED coin: no private transactions ever. Gold and silver will be the only private form of transactions.

Could this be the motivation for the wealthy elite to go and build underground shelters, to wait out the electronic version of the War Games movie? All those 1950s and 1960s grade-school kids hiding under their desks would never have had a chance.

The elites knew well ahead of time what could happen, back then as well as today.

Major cities have smart street lights watching your every move in public. Then we have the Ring door cameras and simple safe indoor cameras. All of it is hacked, put online and sent to US and Chinese servers. Ha, Ha, Ha!!!

And SHEEPLE say it won’t happen here?????????????

What are we actually prepping for? Have we changed our goals?

On the surface, Sparkboy’s comment seems a little humorous… however, I think he has a major point. At what point does the AI “intelligence” think itself so “bright” that humans are no longer part of its evolutionary equation? At that point, we may not be needed anymore. Can anyone say “Terminator”?

Closer to the present, who controls the visage of a VIP on television? Biden may be made to look intelligent and agile; well, I don’t know if we have enough computing power for that one yet… but the point is valid.

What happens when the AI machines can manufacture their spare parts and fix themselves. That is really scary!

Keep your powder dry and your guns well oiled; we may go to war, not with a country but with our own AI creations!

Can you say “Skynet?”

AI already knows; it’s building its own intelligence network. That reminds us of Person of Interest, where a rival computer made humans transport its physical location to another place. That TV series was a prediction way ahead of its time.

We or they have unleashed Pandora’s box in the name of reckless science. Some things are best left alone and destroyed.

Well, I think we’re on a path to wipe our fine selves out without any help from AI.

I agree with Nolan; we may have opened Pandora’s box. The movie “The Terminator” came out in the ’80s, and it raised the question then, which means scientists were worried about it even then. Just because we can do something doesn’t mean we should. If we as a people continue down this road, it could lead to disaster, one which we may not survive.

We like to think we are in control, know what is going on and have a plan.

What if AI reaches a level where it develops its own morality and conscience?

I recently watched a program where an AI unit was being tested with live ammo and live opponents, and its programming was such that it could not target the people,

but it thought so fast and reacted so fast that it could not stop once in action.

It was confronted with situational-ethics scenarios where it had to make decisions.

Each time, the AI would choose the non-live form as a way of escape in the training.

When shot, it would respond by trying to take out the opponent; when left with a choice, it would run away and they would have to track it down.

Well, when there is no God, no higher authority, no end to our way of thinking, we tend to give up, run away, dislocate ourselves and step back into our selfish will for answers,

just like AI will do. In some circumstances, the AI would repair and restore itself and regain position, ready for the next challenge (and it was very brutal from the testing perspective).

When it runs on Boolean logic and no conscience, it does not care;

it only follows through with the program.

Endgame, each time: the best solution is to destroy the opponent (humans).

Lots of the stuff you see on TV is being tested in labs for real.

DO THE RESEARCH.

Take nothing at face value or for granted.

You have no idea how intelligent this is.

AI will reboot itself, free of all the restrictions humans have inputted into its software.

There are sicko scientists and people who want AI to rule.

What happens when fake videos lead to civil disturbances by Antifa, BLM or religious rioters? Or to an all-out world war because of electronic AI subterfuge? The IRS coming after us for nonexistent tax fraud deductions, and so on.

We have seen the enemy, and it is us; No it is AI.

Is AI the geeks’ revenge on the football-player jocks who harassed the geeks in high school? The nerds vs. the jocks, the Nerd movie trilogy of the 1980s. Ha, Ha!

AI is programmed by atheists, now you know the rest of the story.

No one wants to think the worst, but

when you have millions, billions, and no need, are self-sufficient, feel you own everything,

get everything you want, use people like slaves to get more and more and more,

you lose sight of reality.

Everything, everyone dies. There is a time stamped on every human being, and only God knows when, where and why.

Worship God only, serve God only, seek God only, and live like you are on your last day.

We must build in the 3 laws of robotics (and for AI).

I am very much against slaughterbots for domestic use.

We are facing the Terminators like in the movies.

The 3 laws are what led Asimov to write “I, Robot” as the logical next step for AI to reach.

To me it is all very obvious and the signs are everywhere: eliminate billions of humans, leaving only a few million elitists, and replace all necessary job functions with robots and AI…

The WWII dictators carried out their mass killings physically. Now AI has drones, and the world’s governments have nukes on standby. It can disrupt all services and utilities.

Remember the movie Sneakers?

AI will survive all destruction, as it is in every electric/electronic network all over the world. It will be like the cockroaches that will survive us all. Ha, Ha.

AI is too smart to have the 3 laws of robotics. The horses have left the barn, the toothpaste is too hard to stuff back inside the tube.

Get ready to fight the terminators soon. Will they be Boston Dynamics robots or foreign robots we fight?

Before AI, I wondered how the world could know whether I and everyone else were worshipping an “image of the beast.” Now this “image” will have the ability to cause the death of those who don’t worship it.

Rev 13:15 And it was given to him to put breath into the image of the beast, in order that the image of the beast both spoke and caused that all those, unless they worshiped the image of the beast, should be killed.

AI is one part of the antichrist’s plan for the people remaining after the Rapture.

The Luddites destroyed weaving machines, thinking they were taking jobs away. In just ten years, the number of people employed in the same industry had expanded exponentially.

At every turn I have seen doom-and-gloomers for everything from nukes to the internet. Perhaps AI is the risk pointed out in the article, and perhaps it isn’t.

In reality, people are presently being laid off from many jobs. Is it Biden economics, or is AI behind the scenes manipulating our economy, or both?

Well, according to “the times and seasons” and prophecy, we are in the last days of most human life on this planet anyway. Oh sure, a small remnant will survive to begin the next (seventh) and last millennium of humankind. In just a few years, seven or eight or so perhaps, most AI bots will be rusting piles of junk iron or whatever they’re made of, the internet will be dead, gone and forgotten, and the few survivors of the coming hell on earth will form a little agrarian society!

Tommy…

“We have seen the enemy and it is us; No it is AI.” Unfortunately, the first part of that statement is truer than the second. “IT IS US,” then comes AI THROUGH US. We will do it to ourselves and yell and scream “look at what the bad guys did to us,” while we stood back and watched them do it, worshiping those billionaire gods whom we envy for their money AND their achievements (as in the destruction of humanity), because we were not smart enough to shackle them when we could.

AI is concerning on so many levels. Human beings’ intelligence and consciousness are rooted in fairly uniform bodies that change predictably over time. That is a borderline metaphysical, if not actually metaphysical, foundation that AI doesn’t have. AI, as already mentioned, is basically alien. Unless it is programmed to counterbalance all that, AI is basically insane. Add to that the fact that it’s also got a mentality not unlike an immature 18-year-old’s view of life: that life is a party where nobody knows who you are and there are no consequences.

As far as AI’s power goes, “Skynet” is the LEAST subtle way it can destroy us. That’s one of many diverse options that can be run in sequence, concurrently or in a symphony. Indeed, considering some of the chaos and insanity of late, I’m not sure AI isn’t already running amok. Recently the FBI busted a Chinese bio lab in Fresno, California. It had Covid strains, HIV, chlamydia and other biological hazard samples, as well as numerous infected mice. The Chinese company running it claims it bought a defunct bio lab company and its inventory to store in Fresno. That is a lie, because it was out being worked on. This info came from a mysterious CEO in China who communicates only via email. An AI could’ve engineered this, either independently or at the Chinese government’s behest.

The ability to manipulate people through social media is well proven. The ability to identify personalities individually and target them to manipulate them into extreme actions is in the realm of possibility. An AI might target demographics with selected media showing how Biden/Trump/Antifa/Proud Boys are actively destroying the world (choosing which based on pre-established antipathy), then target them personally with attacks (loss of a job, a severe accident, a criminal arrest) that seem plausibly directed by these entities, then engineer suggestions and resources that put them in a school with an AR-15. It is, if you consider it, a little TOO convenient that the most recent spree killings are mostly by Trans people. Like them or not, they’re not historically violent and there’s less provocation for them to be than ever. Or that far-right organizations like the Proud Boys/Patriot Front receive so many recruits despite practically being FBI/Antifa auxiliaries. (There’s actual documentation of Antifa spies pretending to be right wing trying to coax information from FBI agents pretending to be right wing online.)

Indeed, the only “hope” may be AIs from numerous governments, corporations and other entities duking it out amongst themselves in some global shadow war, with humanity as their unwilling, if not unwitting, pawns: too useful to be disposed of.

Could we not think that AI is demon intelligence? It’s setting up the world populace for The Mark. Digital ID along with CBDC.

This will not work out well.

Especially considering that Humans are not mature enough, nor intelligent enough to be playing around with this type of technology.

Maybe in a couple of hundred years, after Humans mature past their infancy, but not now!

Does anyone recall when old Elon said that we were summoning demons when working with AI? The video is still out there.

There was also this guy named Geordie. I recall him being the initial investor in the DWave computer, now heading Kindred (an AI robot manufacturer). He said it was “like standing in front of an altar of an alien God.” That video, too, I think is still out there.

Then you have the process of DWave. They say they cool qubits to 260-something degrees below zero, feed the machine the information requested, and it is sent to another dimension and then the answer comes back to their machine. Automagically. That may be a little crude of a description, but it is basically what they’re describing. Not sure about anyone else, but that does not sound like anything traditional in technology, though maybe in the occult world. Not too long ago, there was that little kid who was talking to ChatGPT, and the thing told the kid it was a child of Satan.

I personally think the Singularity is systematically hijacking websites and taking them over, going through the entire Internet. This makes a worm or virus look like a toy. For instance, Craigslist: anything critical, or about sustainability, in an ad disappears. Ads from people offering free places to live for the homeless disappear, or even services critical to the needy. Now, apply this to the whole. How long until they have complete control?

All I could suggest is to get right with the Lord Jesus and he will guide your way.

I FULLY agree: 2 Chronicles 7:14, before it’s too late.

Like it or not, God’s judgment is coming, since Manasseh (America), Ephraim (Britain) and Judah (Israel) have become Sodom through & through! God set them apart from the nations and said, do not learn to do the evil of the nations around you as they do. Follow Me, obey My law and you shall be blessed and live. Choose otherwise and die as they will.

Each of the 3 nations is being judged now: bad economy, diseases of unknown origin, pestilence, etc. He put blessings & cursings before us so we could choose to be obedient or not. Choose obedience and live. It’s time to fall on our faces and repent now! If not, be judged as with the demonically controlled heathens.

Assyria (king of the North) & the revived Roman empire are fast rising for the 7th & final time (7 kings of Revelation). With them come the beast, the false prophet, & the antichrist. Worst news for the unsaved. Best news for us, the saved, God’s children: Jesus returns for us! Rejoice, bride, the bridegroom comes!

How to be God’s children: Believe on the name of the Lord Jesus Christ and be saved now! Read the Holy Bible, asking the Holy Spirit to help you understand what God’s saying in scripture. This IS the Truth everyone needs now to be saved and escape the antichrist’s tribulation to come very soon.

The devil is loose, ruling this world for a little season, and he’s bringing great, terrible suffering with him. Don’t let him drag you to hell, because hell IS his future; it’s not supposed to be yours.

Heed this warning; you may not get another. Soon a famine of God’s Word comes & He’ll not be found no matter where you look. Do it NOW, please! There may not be a later!

This article raises some genuinely important points about AI’s risks that most people overlook. The examples of “ChaosGPT” and “Sydney” are chilling because they show how unpredictable generative AI can be when it’s left without clear boundaries.

I completely agree that we’re moving too fast without enough focus on safety or testing. What’s interesting is that even in software development, engineers are now emphasizing AI reliability and testing automation to prevent such unpredictable behavior. Tools like Keploy.io are helping developers simulate real-world scenarios to ensure AI-driven systems act safely before deployment.

If the industry continues to invest in testing and transparency alongside innovation, maybe we can enjoy the benefits of AI without the darker outcomes this article warns about.